A particularly hot topic at the moment, privacy is a core pillar of healthcare advertising. Both pharma marketers and consumers need to know that any health data is well-protected and in the right hands. Fortunately for pharma marketers, who are already required to follow stringent regulations like HIPAA, there is differential privacy. This technology can significantly strengthen privacy protections while datasets are being analyzed. To understand the benefits, it’s key to understand the basics. What is it, and how does it work?

What Is Differential Privacy?

In 2006, four computer scientists published an article introducing the concept of differential privacy. They defined it mathematically as a bound on the privacy loss an individual incurs when information is extracted from a statistical database. The research proposed a process that protects the privacy of individuals within a dataset while still extracting useful information from that dataset.

Differential privacy works by adding carefully calibrated randomness, or “noise,” to a computation, such as a summary statistic or a machine learning algorithm run over the data. The noise is large enough to mask whether any single person’s record was included, but small enough that the overall findings stay accurate.

It is particularly useful for large datasets, for example in online advertising, where no single individual’s information is a necessary part of the equation. If a dataset consists of two people, each person contributes 50% of the dataset. In a dataset of 200 people, however, each individual represents just 0.5% of it. The larger the dataset, the less any one person can move the result, so the same amount of noise hides individuals while barely affecting the aggregate. In other words, the technology enables algorithms to extract critical insights and generalized learnings from datasets without linking that information to a specific individual.

There are two approaches to differential privacy. In central differential privacy, noise is added as part of the analysis of a dataset that has already been collected. Here, the entity aggregating data for analysis strips identifying information before aggregating the dataset, then inserts noise to reinforce its privacy measures. In local differential privacy, by contrast, noise is added to the data on each person’s device before it is ever collected or received by the party aggregating and analyzing the dataset.
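To make the central approach concrete, here is a minimal sketch of the Laplace mechanism, one of the standard techniques that grew out of this line of research, applied to a simple counting query. The toy data, the epsilon value, and the function names `laplace_noise` and `private_count` are hypothetical illustrations, not how any particular company implements differential privacy. Because adding or removing one person changes a count by at most 1, the noise scale does not depend on dataset size, which is exactly why a 200-person dataset is distorted proportionally far less than a two-person one.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample from Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records: list[bool], epsilon: float, rng: random.Random) -> float:
    """Central DP: a curator holding the collected records releases a noisy count.

    One person can change a count by at most 1 (sensitivity = 1), so adding
    Laplace noise with scale sensitivity / epsilon gives epsilon-differential privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    sensitivity = 1.0
    true_count = sum(records)
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so runs are repeatable
    epsilon = 1.0            # hypothetical privacy budget
    for size in (2, 200, 20_000):
        # Hypothetical toy data: roughly 30% of people share some attribute.
        records = [rng.random() < 0.3 for _ in range(size)]
        noisy = private_count(records, epsilon, rng)
        print(f"n={size:>6}: true={sum(records):>5}, noisy={noisy:8.1f}")
```

Each run prints a true and a noisy count per dataset size. The absolute noise stays on the order of 1/epsilon throughout, so it can swamp a count of one or two people but becomes a tiny fraction of a count drawn from thousands.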

4 Real-World Examples

Differential privacy is a complicated concept with roots in cryptography. In order to truly understand how it works, it may help to see real-world examples. Here are four of them:

- Browsing history. Google introduced Randomized Aggregatable Privacy-Preserving Ordinal Response (RAPPOR) to Chrome in 2014. It lets the search giant collect probabilistic information about users’ browsing habits, drawing insights about how people interact with their browsers while protecting their sensitive information. Five years later, Google open-sourced its differential privacy library, helping companies study their own data trends in a privacy-safe way.

- App usage. How do people use their smartphone apps? Local differential privacy helps Apple find out by adding noise to individual data on the device before it is sent back. This covers everything from emoji usage to health information from the iOS Health app. Because the noise is inserted locally, Apple can evaluate this information while ensuring it can’t be traced back to a specific person.

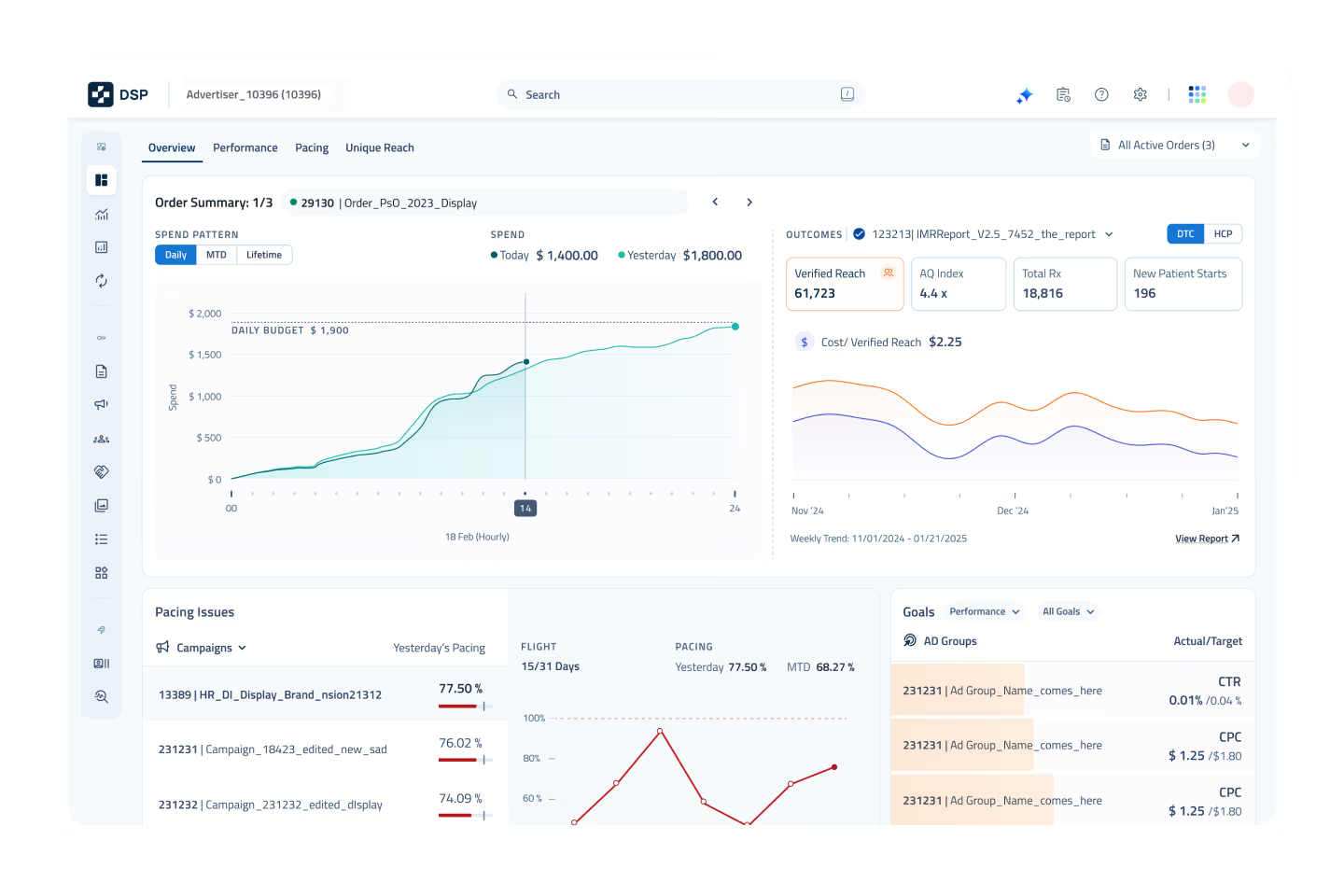

- Healthcare advertising. Differential privacy enables healthcare advertisers to build lookalike models for advertising campaigns: they assess the types of demographics that might be relevant for a campaign and target audiences that fit a similar profile. This allows advertisers to target messaging without relying on anyone’s sensitive information, while protecting the privacy of each individual’s data. It also lets advertisers go beyond what HIPAA compliance alone requires.

- Understanding census data. The U.S. Census Bureau started using the technology with the 2020 Census. The bureau reports the population of each state and the number of housing units in each census block as-is. However, noise is injected into other statistics so that demographic information can’t be used to reveal anyone’s identity from the anonymized datasets.
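The browsing-history and app-usage examples both rely on local differential privacy. The sketch below shows classic randomized response, the simplest local mechanism and the idea RAPPOR’s reporting builds on. It is not Google’s or Apple’s production code, whose mechanisms are more elaborate; the function names, the emoji scenario, the population size, and the epsilon value are all hypothetical.

```python
import math
import random


def truth_probability(epsilon: float) -> float:
    """Probability of reporting the true bit under epsilon-LDP randomized response."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return math.exp(epsilon) / (math.exp(epsilon) + 1.0)


def randomized_response(truth: bool, epsilon: float, rng: random.Random) -> bool:
    """Runs on the user's device: report the true bit with probability p,
    otherwise flip it. The ratio p / (1 - p) = e^epsilon bounds what any
    single report reveals about the person who sent it."""
    p = truth_probability(epsilon)
    return truth if rng.random() < p else not truth


def estimate_rate(reports: list[bool], epsilon: float) -> float:
    """Runs on the server: correct for the known flipping to estimate the
    true fraction f of 'yes' answers.

    E[observed] = p*f + (1 - p)*(1 - f), so f = (observed - (1 - p)) / (2p - 1).
    """
    p = truth_probability(epsilon)
    observed = sum(reports) / len(reports)
    return (observed - (1.0 - p)) / (2.0 * p - 1.0)


if __name__ == "__main__":
    rng = random.Random(7)  # fixed seed so runs are repeatable
    epsilon = math.log(3)   # each report is truthful with probability 3/4
    # Hypothetical population: 100,000 users, about 20% of whom use some emoji.
    truths = [rng.random() < 0.2 for _ in range(100_000)]
    reports = [randomized_response(t, epsilon, rng) for t in truths]
    print(f"true rate:      {sum(truths) / len(truths):.3f}")
    print(f"estimated rate: {estimate_rate(reports, epsilon):.3f}")
```

No individual report can be trusted, since any “yes” may be a flipped “no,” yet the aggregate estimate lands close to the true rate once enough users contribute. Lowering epsilon flips more answers, which strengthens privacy but requires more users for the same accuracy.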

To understand how DeepIntent leverages differential privacy to reach patient audiences in a privacy-safe way, click here.